Most B2B organizations are optimizing for the wrong signals.

They’re building beautiful content, running smart campaigns, and watching their traditional metrics look fine while AI systems quietly route qualified buyers to their competitors.

The gap isn’t effort. It’s understanding.

AI platforms like ChatGPT, Perplexity, and Google’s AI Overviews don’t evaluate authority the way humans do. They’re not impressed by clever copy or emotional storytelling. They’re running statistical pattern recognition across millions of data points, looking for specific signals that reduce their risk of recommending the wrong answer.

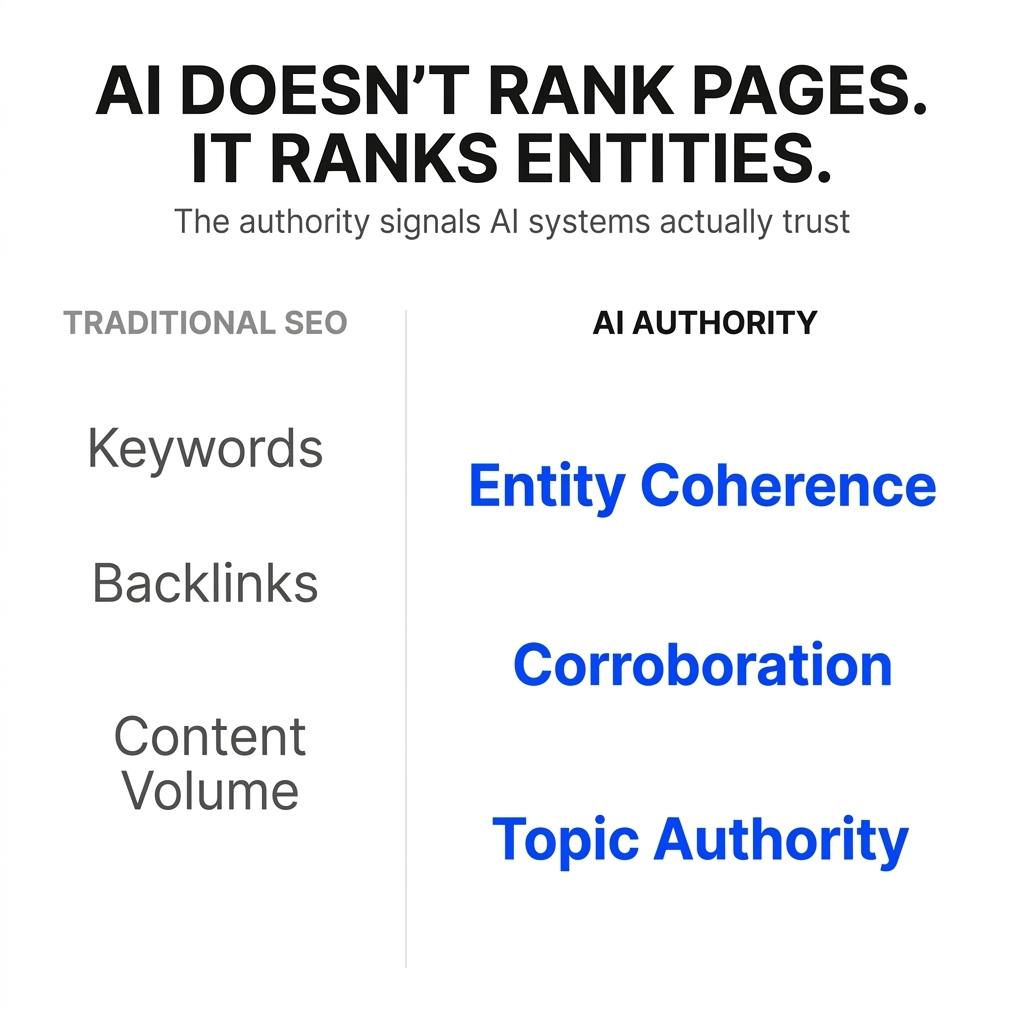

Dr. Patrick McAvoy’s doctoral research on AI-driven discovery revealed something the marketing industry was completely missing: AI systems rank entities, not pages. And the signals that make an entity “trustworthy” to an algorithm are fundamentally different from what makes content persuasive to a person.

Here’s what the research actually shows about which authority signals carry weight in algorithmic evaluation, and why traditional digital marketing infrastructure fails to register in these systems.

The Shift from Relevance to Credibility

Traditional search prioritized relevance. If your page matched the query and had decent backlinks, you had a shot at ranking.

AI systems prioritize credibility as the primary decision factor.

According to research on AI Overview ranking factors, 96% of AI Overview citations come from sources with strong E-E-A-T signals. Pages with 15 or more recognized entities have a 4.8-fold higher selection probability.

This represents a fundamental shift in how visibility is earned. You’re no longer competing to be the most relevant result. You’re competing to be the most credible entity the system is willing to bet on.

Entity Coherence: The Foundation Signal

The first thing AI systems evaluate is whether you exist as a coherent entity at all.

Entity coherence means: same company name, consistent product descriptions, aligned bios for key people, and clear topical focus across every surface where you appear.

When your brand is described inconsistently, split across domains, or overshadowed by partners and resellers, the model doesn’t see a single high-confidence node. It sees fragments.

You can have hundreds of strong pages, but if your brand identity is fragmented, AI systems can’t resolve you as a stable entity worth recommending. The content exists. The entity doesn’t.

This is why traditional page-level SEO often fails in AI-mediated discovery. You’re optimizing individual assets while the system is trying to understand who you are as a unified presence.
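To make entity coherence concrete, here is a minimal sketch of how you might audit your own footprint. It is not how any AI platform actually resolves entities. It is a simple word-overlap check, and the company name, platform names, and descriptions are all hypothetical.

```python
from itertools import combinations


def tokens(text: str) -> set[str]:
    """Lowercase a description and split it into a set of words."""
    return {word.strip(".,;:!?()").lower() for word in text.split()} - {""}


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words two descriptions have in common (0.0 to 1.0)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def coherence_report(profiles: dict[str, str]) -> dict[tuple[str, str], float]:
    """Pairwise word overlap between every two platform descriptions."""
    return {
        (p1, p2): jaccard(tokens(profiles[p1]), tokens(profiles[p2]))
        for p1, p2 in combinations(sorted(profiles), 2)
    }


# Hypothetical descriptions of one company on three surfaces.
profiles = {
    "website": "Acme builds inventory software for regional grocers.",
    "linkedin": "Acme builds inventory software for regional grocers.",
    "directory": "Acme Holdings is a global consulting and staffing group.",
}

report = coherence_report(profiles)

# Print the least consistent pairs first so they are easy to spot.
for pair, score in sorted(report.items(), key=lambda item: item[1]):
    print(pair, round(score, 2))
```

Pairs with low overlap are the places where a reader, human or machine, would struggle to tell that the descriptions refer to the same company. Word overlap is a crude proxy chosen for simplicity; a real audit would also compare names, categories, and key people.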

Corroboration: Independent Validation at Scale

AI systems don’t trust what you say about yourself nearly as much as what independent sources say about you.

Corroboration works through statistical pattern recognition. When multiple credible sources describe you in similar ways, using consistent language about your category, expertise, and track record, the model’s confidence increases.

A brand with 10 high-quality editorial citations may earn more AI citations than a brand with 500 directory links. The difference is independence and consistency.

According to research on AI search trust signals, traditional SEO authority built through backlink volume operates on a different logic than AI search trust. AI systems evaluate corroboration from independent, credible sources, not raw link counts.

One beautifully persuasive case study that lives as an unstructured PDF with inconsistent naming is powerful for humans but weak as a machine signal. The AI can’t easily extract “this company reliably solves X for Y” from it.
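The corroboration idea in this section can be illustrated with a toy tally. This is a sketch under stated assumptions, not a description of any real ranking system: the domains, the `mentions` list, and the `corroboration` helper are hypothetical, and "independent" is reduced to "not hosted on your own domain."

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical mentions of one brand, each labeled with the category it is described as.
mentions = [
    {"url": "https://news.example/acme-profile", "category": "inventory software"},
    {"url": "https://analyst.example/report", "category": "inventory software"},
    {"url": "https://acme.example/about", "category": "inventory software"},
    {"url": "https://listing.example/acme", "category": "consulting"},
]


def corroboration(mentions, own_domain):
    """Return (most common category, sources agreeing on it, independent sources)."""
    independent = {}
    for mention in mentions:
        domain = urlparse(mention["url"]).netloc
        # Self-descriptions do not count as independent validation.
        if domain != own_domain:
            # Count each independent domain once, using its first description.
            independent.setdefault(domain, mention["category"])
    if not independent:
        return None, 0, 0
    counts = Counter(independent.values())
    top_category, agreeing = counts.most_common(1)[0]
    return top_category, agreeing, len(independent)


result = corroboration(mentions, own_domain="acme.example")
print(result)
```

Here two of three independent sources agree on the category, and the brand's own page is ignored. The point of the sketch is the shape of the signal: distinct sources and consistent language, rather than raw link counts.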

Topic-Conditional Authority Profiles

Source authority isn’t a single score. It’s a topic-conditional evaluation.

A medical journal has high authority for health queries but low authority for automotive questions. AI models maintain topic-conditioned authority profiles and use them to filter retrieval candidates.

This means you can be very “big” in general, but weak in the one narrow category a specific query is about. Traditional marketing often chases volume. AI cares whether you’re a reliable specialist for the specific question it’s answering.

The research shows that AI models use topic-conditional authority to decide which entities are safe to recommend for which problems. Your general brand strength matters less than your demonstrated expertise in the exact domain of the query.
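One way to picture a topic-conditional profile is as a score per source and topic pair, rather than one score per source. The sketch below is illustrative only. The domains, scores, threshold, and the `filter_candidates` helper are assumptions made for the example, not values taken from the research.

```python
# Hypothetical authority profiles: one score per (source, topic), not one per source.
AUTHORITY = {
    ("medical-journal.example", "health"): 0.95,
    ("medical-journal.example", "automotive"): 0.10,
    ("car-review.example", "automotive"): 0.85,
    ("car-review.example", "health"): 0.05,
}


def filter_candidates(sources, topic, threshold=0.5):
    """Keep only sources whose authority for this topic meets the threshold."""
    # Sources with no recorded score for the topic default to 0.0.
    scored = [(source, AUTHORITY.get((source, topic), 0.0)) for source in sources]
    kept = [(source, score) for source, score in scored if score >= threshold]
    # Strongest authority for the topic comes first.
    kept.sort(key=lambda pair: pair[1], reverse=True)
    return [source for source, _ in kept]


sources = ["medical-journal.example", "car-review.example", "unknown.example"]
health = filter_candidates(sources, "health")
automotive = filter_candidates(sources, "automotive")
print(health, automotive)
```

The same source passes the filter for one topic and fails it for another, which is the medical journal example in miniature. A source with no track record in the topic never enters the candidate set, however large it is elsewhere.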

The Human-Written Content Advantage

Studies show that human-written content gets 5.4 times more traffic than AI-generated content.

This isn’t about AI systems being anti-AI. It’s about authentic markers of expertise that AI-generated content struggles to replicate naturally.

Algorithms now prioritize “Experience” signals, such as “In my testing” or “When I managed this budget.” These first-hand expertise markers are harder for generative systems to fake convincingly.

Smaller brands and niche publishers that consistently demonstrate this expertise can surface just as strongly as legacy outlets that merely summarize others’ expertise. The system is looking for earned pattern recognition, not just polished aggregation.

Semantic Completeness and Structured Data

Content scoring above 8.5 out of 10 for semantic completeness is 4.2 times more likely to appear in AI Overviews.

Semantic completeness means: clear schema, entity markup, and unambiguous associations between brand, offerings, and proof. It’s about making your content machine-readable, not just human-persuasive.

Traditional infrastructure optimizes copy that persuades people through emotional hooks and clever angles. AI systems evaluate structure and consistency: can they extract clear facts, verify claims across sources, and attach outcomes to your entity with high confidence?

A page can be beautifully designed and conversion-optimized for humans while being nearly invisible to AI systems because the underlying structure is weak.
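As one example of the machine-readable structure described in this section, the sketch below builds schema.org `Organization` markup as JSON-LD. The vocabulary (`@context`, `@type`, `name`, `url`, `description`, `sameAs`, `knowsAbout`) comes from schema.org. The company name, URLs, and topics are placeholders, and nothing here guarantees any particular treatment by an AI system.

```python
import json

# Placeholder values: replace with the exact name and description you use everywhere else.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Acme Inventory",
    "url": "https://www.example.com",
    "description": "Acme Inventory builds inventory software for regional grocers.",
    # sameAs ties the entity to its profiles on other platforms.
    "sameAs": [
        "https://www.linkedin.com/company/example",
        "https://www.crunchbase.com/organization/example",
    ],
    # knowsAbout states the topics the organization claims expertise in.
    "knowsAbout": ["inventory management", "grocery retail"],
}

json_ld = json.dumps(organization, indent=2)

# Wrap the JSON-LD in the script tag that goes in the page's HTML.
snippet = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(snippet)
```

Generating the markup from one source of truth, instead of hand-editing it per page, is one way to keep the name and description identical across every surface.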

The Consistency Signal Across Platforms

Users trust AI-generated answers 2.7 times more when AI references verifiable, consistent digital sources.

Digital information inconsistencies can reduce AI output accuracy by 30% to 40%, even with well-trained models.

This is why entity coherence across platforms matters so much. When your company description varies wildly between your website, LinkedIn, Crunchbase, and industry directories, you’re not just creating brand confusion. You’re actively reducing the AI’s confidence in its recommendation for you.

The system sees conflicting signals and treats you as a higher-risk answer.

Reputation Research as Verification

Google’s Search Quality Raters are explicitly instructed to perform “reputation research.” They manually search for an author’s name to see whether they’re quoted as experts on other reputable sites, whether they’ve been involved in controversies, and whether their credentials can be verified in official third-party databases.

AI systems are doing a version of this at scale. They’re cross-referencing identities across multiple platforms before determining credibility.

According to research on E-E-A-T and AI, a high volume of users searching for “[Author Name] + [Topic]” signals to the algorithm that the author is a recognized leader. Search behavior itself becomes a credibility marker that AI systems interpret.

The Zero-Click Reality

AI summaries are driving up zero-click behavior, with documented CTR declines ranging from 15% to 80% depending on query type and vertical.

Total referral sessions from all LLM platforms combined amount to only about 2% to 3% of the organic traffic Google alone delivers.

Yet the strategic implications are profound.

When AI systems answer questions directly, being cited in that answer becomes more valuable than ranking on page one of traditional search results. You’re not competing for clicks anymore. You’re competing for credibility inside the answer itself.

The Growth Trajectory

In March 2025, 13.14% of all search queries triggered an AI Overview display. That’s twice the share recorded in January of the same year.

A doubling in two months shows how quickly algorithmic answer generation is becoming the default search experience.

This isn’t a future trend to watch. It’s a current reality whose reach doubled between January and March.
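The arithmetic behind the doubling is short enough to check by hand. The sketch below uses only the March figure and the "twice January" claim from this section. The constant monthly growth rate is an assumption made for illustration, since two data points cannot confirm it.

```python
march_share = 13.14   # percent of queries showing an AI Overview in March 2025
months_elapsed = 2    # January to March

# "Twice as many as in January" implies January was half the March share.
january_share = march_share / 2

# Assumption: growth was the same every month. Then the monthly factor
# is the square root of 2, because two months of it produce a doubling.
monthly_factor = 2 ** (1 / months_elapsed)
monthly_growth_percent = (monthly_factor - 1) * 100

print(f"Implied January share: {january_share:.2f}%")
print(f"Implied monthly growth: {monthly_growth_percent:.1f}%")
```

Under that assumption the share grew by roughly 41% per month. Whether that pace continues is a separate question the two data points cannot answer.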

Why Traditional Infrastructure Fails

Traditional digital marketing infrastructure was built for human decision-makers, not algorithmic credibility filters.

The mismatch shows up in three ways:

Campaign-centric operations versus graph-centric reality. Your operations are organized by campaign calendar. The AI’s view of you is cross-campaign and long-lived. Frequent repositioning and inconsistent messaging create a noisy, unstable footprint that weakens your node in the graph.

Human persuasion versus machine-readable authority. You optimize copy that persuades people once they arrive. AI systems evaluate whether there’s a stable entity with clear schema, unambiguous associations, and corroborated claims across many sources.

Channel metrics versus assistant decision criteria. You measure CTR, CPC, and MQL volume. AI systems care about whether they can answer the user completely using their internal knowledge, and which entities produce low complaint rates when recommended.

You might be winning clicks and MQLs on the open web, but if the AI’s training history doesn’t have you as a low-risk, high-satisfaction entity, you don’t get pulled into the answer set.

The Entity-Level Authority Shift

This marks a shift from page-level SEO to entity-level authority.

It’s no longer just about whether a page is optimized. It’s about whether your brand is trusted across the web.

AI systems now cross-reference identities across multiple platforms before determining credibility. According to research on trust signals, this represents a fundamental change in how brands need to think about their digital presence.

You can’t game this with a single perfect landing page or a clever campaign. The system is evaluating your entire footprint, looking for consistent patterns that reduce its risk of being wrong.

What This Means for B2B Organizations

Most B2B brands can get found if someone searches their exact name. That’s findability.

Being the default answer means the system volunteers you as one of the safest options even when users don’t know you exist.

The difference is this: findable means “if someone already knows to look for you, the system can retrieve you.” Default means “even if they don’t know you exist, the system recommends you as a trusted option.”

In traditional search, being findable was often enough. Users saw pages of links and could click around to build their own shortlist.

In AI-mediated discovery, users see one answer box and a tiny handful of names. The assistant’s job is to pre-compress the market into “here’s who matters for your situation.”

If you’re only findable, you show up after the shortlist is set, and only if the buyer already knows to ask for you. If you’re the default, you shape the shortlist itself.

The Research-Backed Path Forward

The research is clear on what AI systems prioritize:

Entity coherence across all platforms. Corroboration from independent, credible sources. Topic-conditional authority in your specific domain. Semantic completeness and structured data. Consistent signals that reduce algorithmic risk.

These aren’t the signals most B2B organizations have been optimizing for. They’re not the metrics in your current dashboard. They’re not what your agency reports on.

But they’re what determine whether AI systems are willing to bet on you when a qualified buyer asks for help.

The window for establishing this kind of authority is narrowing. AI systems are already locking in their defaults. Every week of usage reinforces existing patterns. The earlier you become the recommended answer for your category, the more your footprint gets queried, clicked, cited, and linked as an example.

That creates a feedback loop that’s very hard for late movers to break.

The question isn’t whether AI-mediated discovery is coming. It’s already here, and its reach doubled in two months. The question is whether you’re engineering the authority signals these systems actually trust, or just hoping your traditional marketing will somehow translate.

The research shows it won’t.